4 min read

Is the Internet Drowning in Digital (AI-Generated) Garbage?

We're drowning in digital garbage, and frankly, it's time to stop pretending otherwise. The internet has become a septic tank of AI-generated...

4 min read

Joy Youell

:

May 11, 2026 12:00:01 AM

Joy Youell

:

May 11, 2026 12:00:01 AM

-May-06-2026-05-08-13-2218-PM.png)

At the AI Agent Conference in New York, Barr Moses, CEO and Co-Founder of Monte Carlo, opened her session with a statement that reframed every conversation about enterprise AI that followed it: "The agent reliability crisis isn't coming. It's already here. It just looks like a data problem."

That framing — AI failures as data failures — was the intellectual anchor for one of the most practically useful talks of the two-day event. Moses introduced a four-layer stack for evaluating enterprise AI readiness and made the case that the companies succeeding with agents in production aren't the ones with the best models. They're the ones who built observability across all four layers from the start.

Moses led with survey data that painted an honest picture of where most organizations actually are. Nearly every engineer is experimenting with AI. Very few are running agents in production at meaningful scale. "Lack of trust is slowing deployment. The only thing stopping us is trust."

That gap between experimentation and production isn't a capability problem. The models work. The frameworks exist. The blocker is confidence — in the outputs, in the retrieval, in the pipelines feeding the system. Enterprise AI adoption is fundamentally a confidence problem, and confidence requires observability. You can't trust what you can't see.

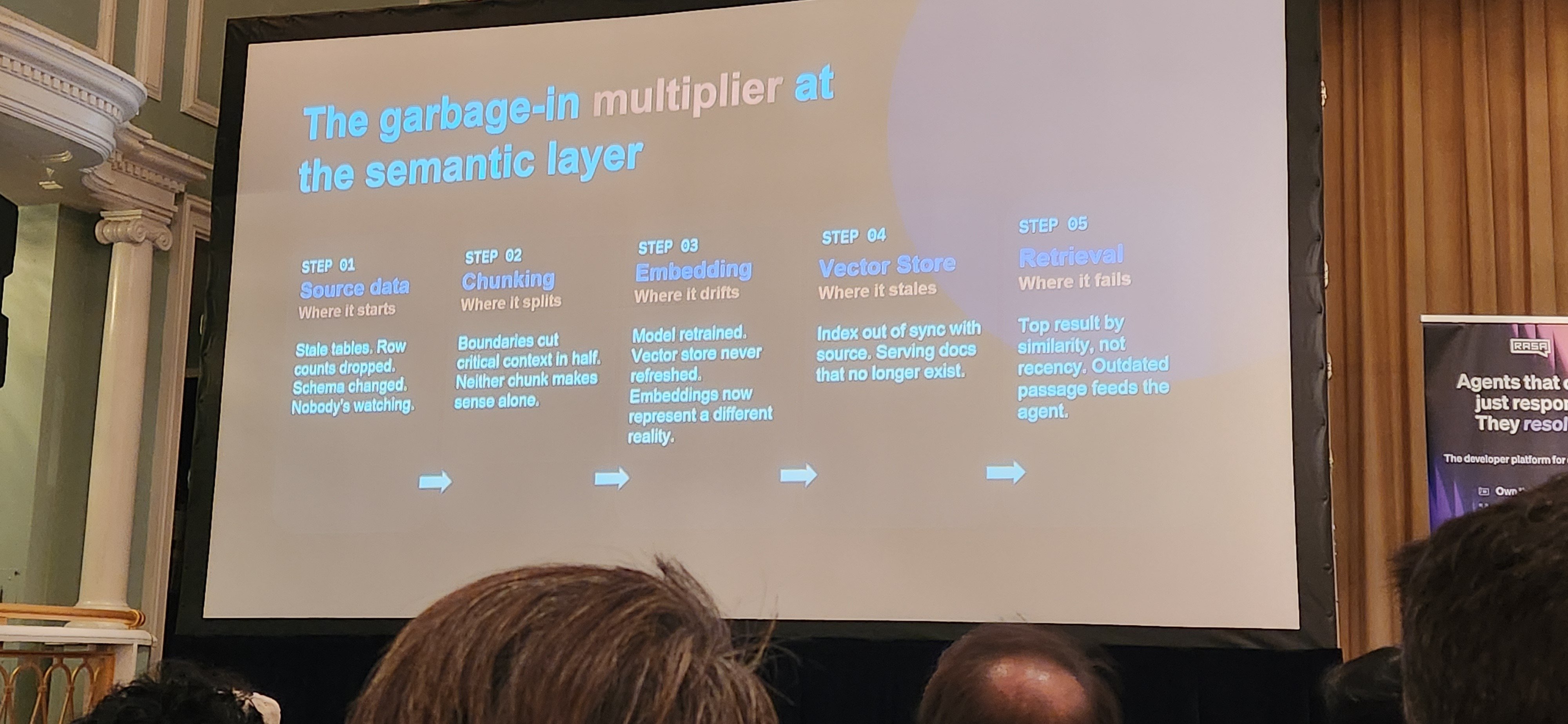

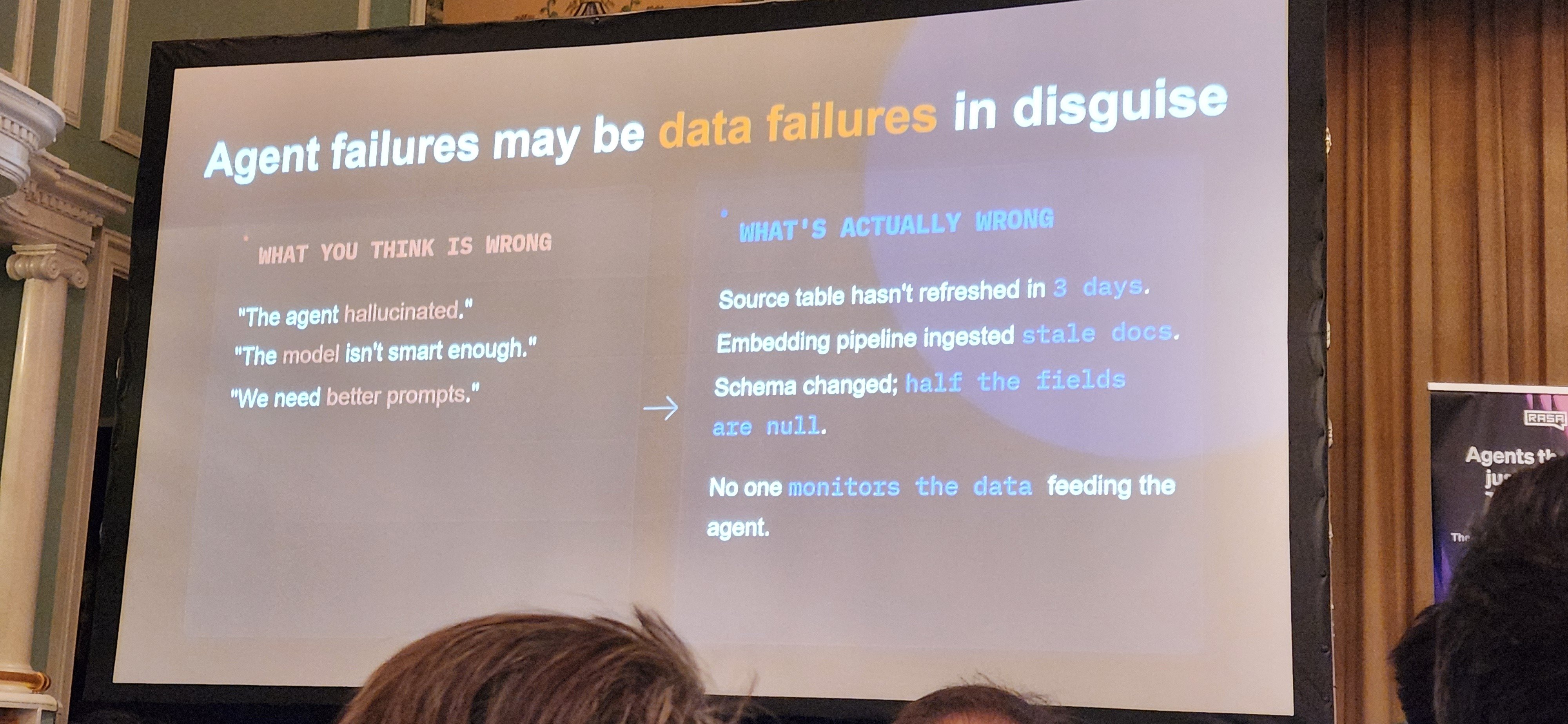

This is the insight that gives the talk its title and its teeth. When an enterprise AI system produces a wrong answer, the instinct is to look at the model, the prompt, or the agent behavior. Moses argues that in most cases, the failure originated much earlier — upstream in a data pipeline, a schema change, a stale embedding, a retrieval system that quietly degraded.

"What looks like an AI problem is often a data problem. One schema change upstream can cascade through the whole system. The failures compound downstream."

AI systems are multiplicative failure systems. A small problem at the data layer doesn't stay small. It corrupts the context layer, which corrupts the agent's reasoning, which produces outputs that are wrong in ways that are extremely difficult to trace back to their origin. By the time a human notices something is off, the failure has propagated through multiple layers and affected an unknown number of downstream interactions.

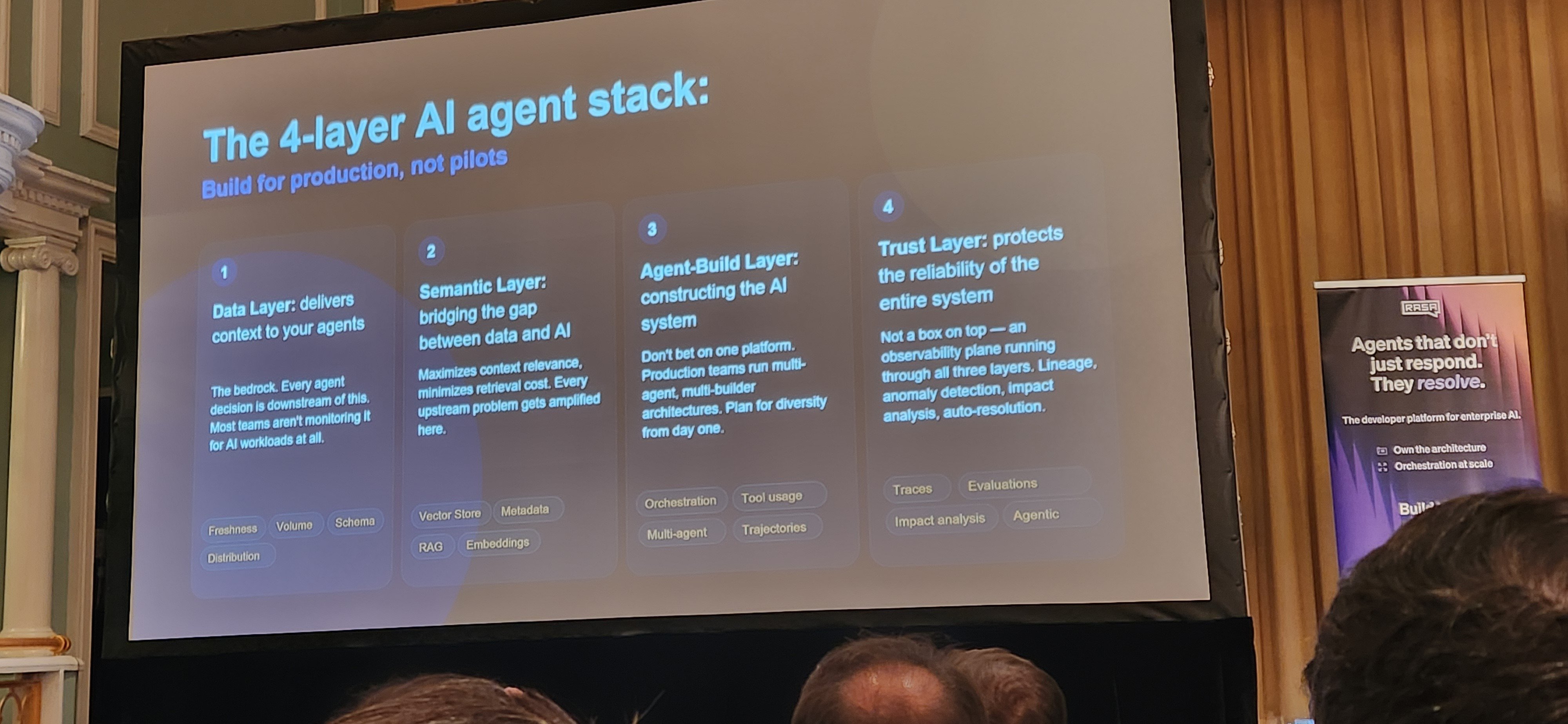

The central framework Moses introduced is worth understanding in full because it's a practical diagnostic for any enterprise AI deployment.

The Data Layer is the foundation — databases, warehouses, pipelines, source systems. This is where data originates and where the most consequential failures begin. Schema drift, pipeline outages, stale records, silent upstream failures. The question this layer has to answer: is the data the agent will act on accurate, current, and complete?

The Context Layer is retrieval and semantics — embeddings, vector stores, retrieval systems, semantic indexing. This is where data gets transformed into what agents can actually use. The question here: is the agent pulling the right information? Retrieval drift — embeddings degrading over time as source data changes — is one of the most common and least monitored failure modes in production AI systems.

The Agent Layer is where the agent itself operates — decision making, orchestration, reasoning, tool usage. This is the layer most organizations focus on. Moses' argument is that problems here are frequently symptoms of problems in the layers below. An agent behaving irrationally is often retrieving corrupted context from a degraded context layer, which is itself downstream of a data pipeline failure.

The Trust Layer is observability — tracing, monitoring, evaluation, verification, automated remediation. This is what makes the other three layers auditable and improvable. Without it, you're flying blind. "Observability creates trust. People trust systems when they can see what happened."

Moses demonstrated full-stack agent tracing — following a failure from the agent output back through retrieval, through embeddings, all the way to an upstream Airflow job that had silently failed. "You need to trace all the way upstream. The issue usually starts earlier than you think."

The scale implication is significant. Automated detection found the failure in 12 seconds. Human review took weeks. As organizations move from running dozens of agents to running thousands — which Moses predicted is coming quickly — manual monitoring becomes operationally impossible. AI observability has to become AI-powered itself.

One of the more organizationally significant arguments of the session. Enterprise AI has historically been built by AI teams working separately from data teams. Moses argued that separation is no longer viable. "Data and AI systems are intertwined. They must be viewed as one system."

The data pipeline is the AI system. Failures in one are failures in the other. Organizations still running separate data operations and AI operations are creating structural blind spots that will produce the exact failure modes Moses described — upstream problems that silently propagate through the AI stack until something breaks visibly downstream.

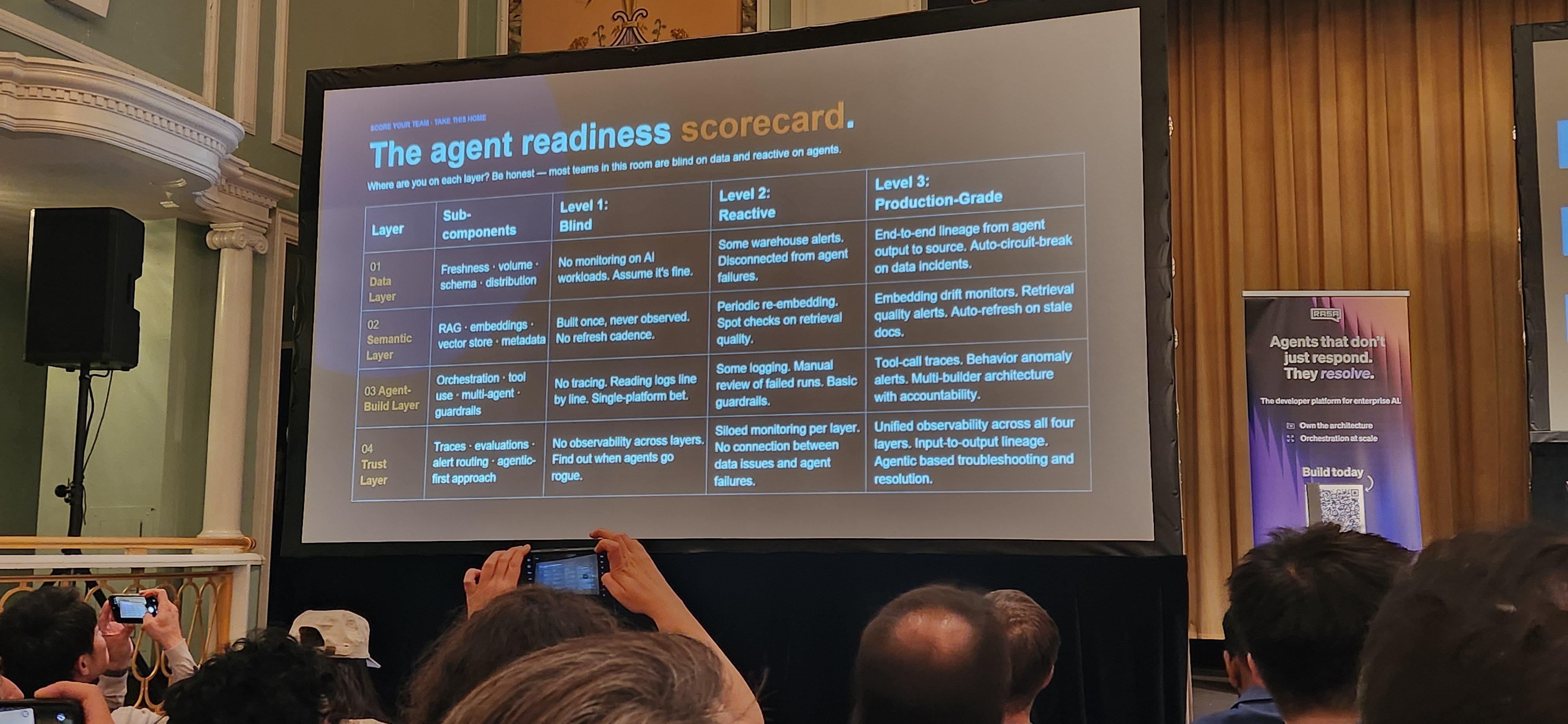

Moses presented a maturity framework running from reactive debugging through automated observability to autonomous remediation. Her assessment of where most enterprises currently sit: "Most organizations are still level one." Reactive. Finding out about failures after they've already affected users, outputs, and decisions.

The path forward requires building observability infrastructure before it's needed, not after production failures force the issue. The organizations that do this early build a compounding advantage: every failure they catch and trace makes the system more reliable and more trustworthy over time.

Moses closed with the strategic implication that ran through the entire session. "The winners aren't the companies with the best models. They're the companies with the best observability."

Model access is a commodity. Every organization can reach frontier models through an API. The ability to run those models reliably at enterprise scale — with full-stack tracing, automated failure detection, and the organizational confidence that comes from genuine production visibility — is not a commodity. It's built over time, it compounds, and it's hard to replicate quickly.

Trust infrastructure is the moat.

Barr Moses presented at the AI Agent Conference 2026 in New York. She is CEO and Co-Founder of Monte Carlo.

4 min read

We're drowning in digital garbage, and frankly, it's time to stop pretending otherwise. The internet has become a septic tank of AI-generated...

The U.S. Department of Health and Human Services just announced a $2 million prize for AI tools that will help caregivers manage the crushing burden...

This weekend, Musk announced plans for "Baby Grok," which he describes as "an app dedicated to kid-friendly content" under his xAI company. The...